No furnace operator intentionally wastes alloy.

Yet across steel plants worldwide, alloy consumption steadily escalates. Not because recipes are incorrect. Not because metallurgists lack discipline. But because recovery behaviour is uncertain, and in steelmaking that uncertainty compels defensive decisions.

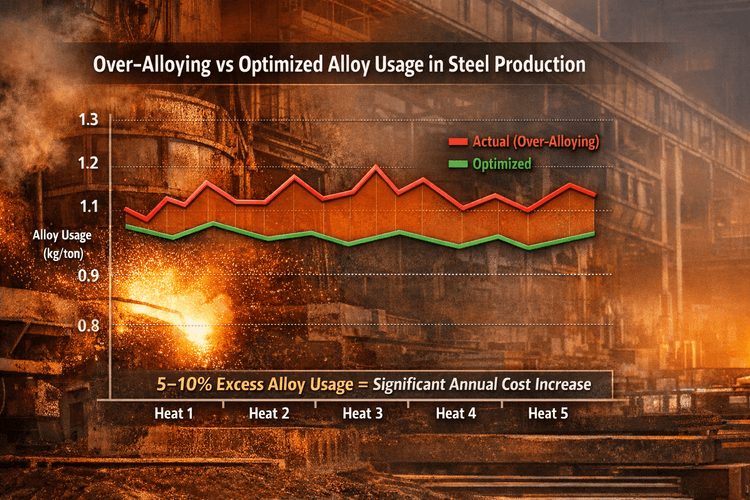

When chemistry must fall within tight specification windows, the penalty for undershooting is immediate: re-sampling, reheating, production delays, and quality risk. The penalty for overshooting is diffuse and delayed: it surfaces later as higher production cost and lower operational efficiency.

Faced with that imbalance, operators choose safety.

They add margin.

That margin, multiplied across thousands of heats per year, quietly compounds into millions of dollars of avoidable alloy cost.

Over-alloying is not an operational failure. It is a rational response to unpredictable recovery.

Alloy recovery inside an electric arc furnace (EAF) or secondary metallurgy stage is not a fixed coefficient. It is the result of competing thermodynamic and kinetic mechanisms unfolding in real time, making it a critical factor in steel manufacturing process optimization.

When an alloy is introduced into the bath, multiple pathways compete:

Recovery behaviour is influenced by:

These relationships are nonlinear and directly impact alloy recovery in steelmaking and overall process stability.

A marginal increase in FeO can disproportionately reduce manganese recovery.

A minor shift in temperature can alter aluminium yield efficiency.

Holding time variations influence oxidation kinetics in measurable ways.

Static recovery assumptions cannot capture this complexity, which makes process optimization in the steel industry essential for improving efficiency and reducing variability.

Most plants operate on historical averages:

But averages conceal variance.

If manganese recovery fluctuates between 78 and 88 percent across similar heats, the statistical average offers little protection against deviation risk.

Operators understand this implicitly.

When targeting a narrow chemistry band — for example 0.90 to 0.95 percent Mn — an unexpected recovery drop may push the heat below specification.

The consequences are immediate:

To avoid this cascade, operators add surplus alloy. What appears as inefficiency is, in reality, risk insurance.
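The arithmetic behind that insurance can be sketched with a simplified mass balance. The 78 to 88 percent recovery range and the 0.90 to 0.95 percent Mn band come from the text; the 100 t heat, the 0.10 percent starting Mn, and the 75 percent Mn ferromanganese grade are hypothetical values chosen only for illustration, and the balance ignores the mass the alloy itself adds to the bath.

```python
def alloy_addition_kg(heat_mass_kg, start_pct, target_pct, alloy_grade, recovery):
    """Ferroalloy mass (kg) needed to lift the bath from start_pct to target_pct.

    Simplified mass balance: ignores the mass the alloy itself adds to the heat.
    alloy_grade and recovery are fractions in (0, 1].
    """
    if not (0 < alloy_grade <= 1 and 0 < recovery <= 1):
        raise ValueError("alloy_grade and recovery must be fractions in (0, 1]")
    element_needed_kg = heat_mass_kg * (target_pct - start_pct) / 100.0
    return element_needed_kg / (alloy_grade * recovery)


def final_pct(heat_mass_kg, start_pct, alloy_kg, alloy_grade, actual_recovery):
    """Bath chemistry (%) after the addition, given the recovery actually achieved."""
    recovered_kg = alloy_kg * alloy_grade * actual_recovery
    return start_pct + 100.0 * recovered_kg / heat_mass_kg


# Hypothetical heat: 100 t, 0.10 % Mn before addition, ferromanganese at 75 % Mn.
HEAT_KG, START_PCT, GRADE = 100_000, 0.10, 0.75

# Dose for mid-band (0.925 % Mn) using the midpoint of the 78-88 % recovery range.
dose_on_average = alloy_addition_kg(HEAT_KG, START_PCT, 0.925, GRADE, 0.83)

# Where does the heat land if recovery turns out to be 78 %?
mn_if_low = final_pct(HEAT_KG, START_PCT, dose_on_average, GRADE, 0.78)

# Defensive dose: sized so that even 78 % recovery reaches the 0.90 % lower limit.
dose_defensive = alloy_addition_kg(HEAT_KG, START_PCT, 0.90, GRADE, 0.78)

print(f"average-based dose: {dose_on_average:.0f} kg -> {mn_if_low:.3f} % Mn at 78 % recovery")
print(f"defensive dose:     {dose_defensive:.0f} kg (+{dose_defensive - dose_on_average:.0f} kg)")
```

Under these assumed inputs, the average-based dose lands at about 0.875 percent Mn when recovery comes in at the low end, below the 0.90 percent limit. Guarding against that outcome costs roughly 42 kg of extra ferromanganese on this single heat.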

While the incremental alloy addition per heat may appear negligible, the aggregate impact on steel plant costs and efficiency is material.

Across mid-to-large integrated steel plants, baseline analysis commonly reveals:

For a plant producing one million tons annually, even modest alloy recovery optimization translates into multi-million-dollar annual savings.

But the deeper cost is systemic instability in steel production.

Recovery variance drives:

The issue is not alloy expenditure alone. It is decision volatility at the furnace — the first and most critical economic lever in steel manufacturing.

Traditional steelmaking recipe tables assume:

Real furnaces do not operate under such static conditions.

Oxidation potential shifts. Slag chemistry evolves. Scrap variability introduces process noise. Furnace lining degradation alters thermal behaviour.

Static recipes shift the burden of uncertainty to the operator.

Without contextual recovery modelling in steel plants, uncertainty remains unmanaged, leading to inefficiencies in alloy usage and process control.

Reducing over-alloying in steelmaking requires reducing variance at the moment of decision.

A dynamic recovery intelligence layer models alloy recovery as a function of real-time furnace conditions, rather than relying on historical averages.

Technically, this involves:

Instead of applying static assumptions such as:

Mn recovery = 85 percent

the model estimates recovery as a function of current furnace conditions:

Mn recovery = f(temperature, slag condition, oxygen activity, furnace state, historical patterns)

Critically, it provides not only a predicted recovery value but also a confidence interval, enabling better decision-making.
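As a minimal sketch of what "a prediction plus an interval" can look like, the example below uses a k-nearest-neighbour lookup over past heats. This technique is swapped in purely for brevity; the article does not specify a model type. The `Heat` record, its three feature fields, and the `predict_recovery` function are hypothetical names, and the returned half-width is a rough spread band (z times the neighbours' standard deviation), not a rigorous statistical confidence interval.

```python
from dataclasses import dataclass
from math import sqrt
from statistics import mean, stdev


@dataclass(frozen=True)
class Heat:
    """One historical heat (hypothetical schema, for illustration only)."""
    temperature_c: float   # bath temperature at the time of addition
    slag_feo_pct: float    # FeO content of the slag
    oxygen_ppm: float      # dissolved oxygen measurement
    mn_recovery: float     # observed Mn recovery, as a fraction


def _features(heat):
    return (heat.temperature_c, heat.slag_feo_pct, heat.oxygen_ppm)


def predict_recovery(history, temperature_c, slag_feo_pct, oxygen_ppm, k=5, z=1.96):
    """Return (predicted_recovery, half_width) from the k most similar past heats."""
    if k < 2 or len(history) < k:
        raise ValueError("need k >= 2 and at least k historical heats")

    # Scale each feature by its spread across the history so units are comparable.
    columns = list(zip(*(_features(h) for h in history)))
    scales = [stdev(col) or 1.0 for col in columns]
    query = (temperature_c, slag_feo_pct, oxygen_ppm)

    def distance(heat):
        return sqrt(sum(((a - b) / s) ** 2
                        for a, b, s in zip(_features(heat), query, scales)))

    nearest = sorted(history, key=distance)[:k]
    recoveries = [h.mn_recovery for h in nearest]
    return mean(recoveries), z * stdev(recoveries)
```

When the most similar past heats behaved consistently, the half-width is small and the prediction can be trusted with less margin. When they scattered, the half-width widens and signals that margin is still warranted.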

If predicted recovery is 84 percent ± 1.5 percentage points, safety margins can be reduced with confidence. If uncertainty remains wide, margin remains necessary.

The objective is not shifting averages — it is narrowing prediction variance in alloy recovery.

When prediction variance contracts, safety margins shrink without increasing metallurgical risk, leading to improved cost efficiency and process stability in steel plants.
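The link between interval width and alloy margin can be illustrated by dosing against the bottom of the prediction interval. The sketch below compares a wide band (83 percent ± 5 points, one way of reading the 78 to 88 percent historical range) with the 84 percent ± 1.5 point prediction mentioned above. The heat size, starting Mn, ferromanganese grade, and the function name `dose_for_lower_bound` are hypothetical, and the mass balance ignores the alloy's own contribution to heat weight.

```python
def dose_for_lower_bound(heat_mass_kg, start_pct, spec_min_pct, alloy_grade,
                         predicted_recovery, half_width):
    """Ferroalloy mass (kg) that still reaches spec_min_pct if recovery comes in
    at the bottom of the prediction interval (predicted_recovery - half_width)."""
    worst_case = predicted_recovery - half_width
    if not 0 < worst_case <= 1:
        raise ValueError("lower bound of recovery must be a fraction in (0, 1]")
    if not 0 < alloy_grade <= 1:
        raise ValueError("alloy_grade must be a fraction in (0, 1]")
    element_needed_kg = heat_mass_kg * (spec_min_pct - start_pct) / 100.0
    return element_needed_kg / (alloy_grade * worst_case)


# Hypothetical heat: 100 t, 0.10 % Mn before addition, 0.90 % lower spec limit,
# ferromanganese at 75 % Mn.
wide = dose_for_lower_bound(100_000, 0.10, 0.90, 0.75,
                            predicted_recovery=0.83, half_width=0.05)
narrow = dose_for_lower_bound(100_000, 0.10, 0.90, 0.75,
                              predicted_recovery=0.84, half_width=0.015)

print(f"wide interval:   {wide:.0f} kg")
print(f"narrow interval: {narrow:.0f} kg  (saves {wide - narrow:.0f} kg per heat)")
```

Both doses protect the lower specification limit at their respective worst case. The narrower interval simply has a less pessimistic worst case, so the same protection costs less alloy.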

In many plants, recovery behaviour is managed by experienced metallurgists whose intuition reflects years of pattern recognition.

Dynamic modelling formalizes that intuition:

This approach does not displace expertise.

It institutionalizes it.

Instead of depending on a handful of experts, the plant embeds metallurgical intelligence into its decision infrastructure.

The furnace is the origin point of cost per ton.

Chemistry instability propagates downstream:

Reducing recovery uncertainty at the furnace stabilizes the entire integrated steel plant.

Alloy optimization is not a furnace-only initiative. It is a structural cost and stability lever across production.

Over-alloying is not a performance issue.

It is an uncertainty problem in steelmaking.

When recovery is unpredictable, safety margins in alloy addition are justified.

When recovery becomes predictable, cost per ton declines structurally — not temporarily.

The steel plants that lead will not be those that demand tighter discipline.

They will be those that reduce uncertainty at the point of metallurgical decision-making.

Heat by heat.

Model by model.

Decision by decision.